CCNP Switch: Aggregating Switch Links

Switch Port Aggregation with EtherChannel

As discussed in Chapter 5, “Switch Port Configuration,” switches can use Ethernet, Fast Ethernet, or Gigabit Ethernet ports to scale link speeds by a factor of ten. Cisco offers another method of scaling link bandwidth by aggregating, or bundling, parallel links, termed the EtherChannel technology. Two to eight links of either Fast Ethernet (FE) or Gigabit Ethernet (GE) are bundled as one logical link of Fast EtherChannel (FEC) or Gigabit EtherChannel (GEC), respectively.

This bundle provides a full-duplex bandwidth of up to 1600 Mbps (eight links of Fast Ethernet) or 16 Gbps (eight links of Gigabit Ethernet).
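The arithmetic behind these figures can be sketched in a few lines of Python. The function name and its parameters are illustrative only; the full-duplex figure simply counts both directions of every link in the bundle.

```python
def bundle_bandwidth_mbps(links: int, link_speed_mbps: int) -> int:
    """Return the full-duplex aggregate bandwidth of an EtherChannel in Mbps."""
    if not 2 <= links <= 8:
        raise ValueError("an EtherChannel bundles two to eight links")
    # Full duplex: each link carries link_speed_mbps in each direction.
    return links * link_speed_mbps * 2


print(bundle_bandwidth_mbps(8, 100))    # eight Fast Ethernet links
print(bundle_bandwidth_mbps(8, 1000))   # eight Gigabit Ethernet links
```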

This also provides an easy means to “grow,” or expand, a link’s capacity between two switches, without having to continually purchase hardware for the next magnitude of throughput. For example, a single Fast Ethernet link (200 Mbps throughput) can be incrementally expanded up to eight Fast Ethernet links (1600 Mbps) as a single Fast EtherChannel. If the traffic load grows beyond that, the growth process can begin again with a single Gigabit Ethernet link (2 Gbps throughput), which can be expanded up to eight Gigabit Ethernet links as a Gigabit EtherChannel (16 Gbps). The process repeats again by moving to a single 10-Gigabit Ethernet link, and so on.

Ordinarily, having multiple or parallel links between switches creates the possibility of bridging loops, an undesirable condition. EtherChannel avoids this situation by bundling parallel links into a single, logical link, which can act as either an access or a trunk link. Switches or devices on each end of the EtherChannel link must understand and use the EtherChannel technology for proper operation.

Although an EtherChannel link is seen as a single logical link, the link doesn’t necessarily have an inherent total bandwidth equal to the sum of its component physical links. For example, suppose an FEC link is made up of four full-duplex, 100-Mbps Fast Ethernet links. Although it is possible for the FEC link to carry a total throughput of 800 Mbps (if each link becomes fully loaded), the single resulting FEC bundle does not operate at this speed.

Instead, traffic is distributed across the individual links within the EtherChannel. Each of these links operates at its inherent speed (200 Mbps full duplex for FE) but carries only the frames placed on it by the EtherChannel hardware. If one link within the bundle is favored by the load-distribution algorithm, that link will carry a disproportionate amount of traffic. In other words, the load isn’t always distributed equally among the individual links. The load-balancing process is explained further in the next section.

EtherChannel also provides redundancy with several bundled physical links. If one of the links within the bundle fails, traffic sent through that link automatically is moved to an adjacent link. Failover occurs in less than a few milliseconds and is transparent to the end user. As more links fail, more traffic is moved to further adjacent links. Likewise, as links are restored, the load automatically is redistributed among the active links.

Bundling Ports with EtherChannel

EtherChannel bundles can consist of up to eight physical ports of the same Ethernet media type and speed. Some configuration restrictions exist to ensure that only similarly configured links are bundled.

Generally, all bundled ports first must belong to the same VLAN. If used as a trunk, bundled ports must be in trunking mode, have the same native VLAN, and pass the same set of VLANs. Each of the ports should have the same speed and duplex settings before being bundled. Bundled ports also must be configured with identical spanning-tree settings.
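As a rough illustration of these restrictions, the following Python sketch compares a set of candidate ports before they are bundled. The PortConfig class, its field names, and the bundle_mismatches function are hypothetical and exist only for this example; a real switch performs these checks internally, and spanning-tree settings are not modeled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortConfig:
    """Hypothetical summary of the settings that must match across a bundle."""
    name: str
    speed_mbps: int
    duplex: str                             # "full" or "half"
    mode: str                               # "access" or "trunk"
    access_vlan: int = 1                    # compared when mode == "access"
    native_vlan: int = 1                    # compared when mode == "trunk"
    allowed_vlans: frozenset = frozenset()  # compared when mode == "trunk"


def bundle_mismatches(ports):
    """Compare every port against the first one and list the differences."""
    reference = ports[0]
    problems = []
    for port in ports[1:]:
        fields = ["speed_mbps", "duplex", "mode"]
        if reference.mode == "trunk":
            fields += ["native_vlan", "allowed_vlans"]
        else:
            fields += ["access_vlan"]
        for field in fields:
            if getattr(port, field) != getattr(reference, field):
                problems.append(f"{port.name}: {field} differs from {reference.name}")
    return problems


ports = [
    PortConfig("Fa0/1", 100, "half", "access", access_vlan=10),
    PortConfig("Fa0/2", 100, "half", "access", access_vlan=10),
    PortConfig("Fa0/3", 100, "full", "access", access_vlan=10),
]
print(bundle_mismatches(ports))
```

An empty list means the ports agree on every setting the sketch knows about.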

Distributing Traffic in EtherChannel

Traffic in an EtherChannel is distributed across the individual bundled links in a deterministic fashion; however, the load is not necessarily balanced equally across all the links. Instead, frames are forwarded on a specific link as a result of a hashing algorithm. The algorithm can use source IP address, destination IP address, or a combination of source and destination IP addresses, source and destination MAC addresses, or TCP/UDP port numbers. The hash algorithm computes a binary pattern that selects a link number in the bundle to carry each frame.

If only one address or port number is hashed, a switch forwards each frame by using one or more low-order bits of the hash value as an index into the bundled links. If two addresses or port numbers are hashed, a switch performs an exclusive-OR (XOR) operation on one or more low-order bits of the addresses or TCP/UDP port numbers and uses the result as an index into the bundled links.

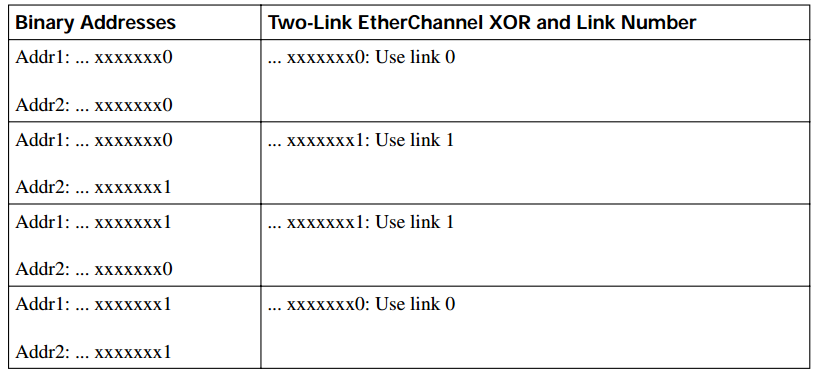

For example, an EtherChannel consisting of two links bundled together requires a 1-bit index. If the index is 0, link 0 is selected; if the index is 1, link 1 is used. Either the lowest-order address bit or the XOR of the last bit of the addresses in the frame is used as the index. A four-link bundle uses a hash of the last 2 bits. Likewise, an eight-link bundle uses a hash of the last 3 bits. The hashing operation’s outcome selects the EtherChannel’s outbound link. Table 8-2 shows the results of an XOR on a two-link bundle, using the source and destination addresses.

The XOR operation is performed independently on each bit position in the address value. If the two address values have the same bit value, the XOR result is always 0. If the two address bits differ, the XOR result is always 1. In this way, frames can be distributed statistically among the links with the assumption that MAC or IP addresses themselves are distributed statistically throughout the network. In a four-link EtherChannel, the XOR is performed on the lower 2 bits of the address values, resulting in a 2-bit XOR value (each bit is computed separately) or a link number from 0 to 3.

Table 8-2 Frame Distribution on a Two-Link EtherChannel

As an example, consider a packet being sent from IP address 192.168.1.1 to 172.31.67.46. Because EtherChannels can be built from two to eight individual links, only the rightmost (least significant) 3 bits are needed as a link index. From the source and destination addresses, these bits are 001 (1) and 110 (6), respectively. For a two-link EtherChannel, a 1-bit XOR is performed on the rightmost address bit: 1 XOR 0 = 1, causing Link 1 in the bundle to be used. A four-link EtherChannel produces a 2-bit XOR: 01 XOR 10 = 11, causing Link 3 in the bundle to be used. Finally, an eight-link EtherChannel requires a 3-bit XOR: 001 XOR 110 = 111, where Link 7 in the bundle is selected.
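The same worked example can be reproduced with a short Python sketch. The link_index function is a hypothetical helper written for this illustration; it models only the XOR of low-order address bits described here and does not claim to match the exact hash used by any particular switch hardware.

```python
import ipaddress


def link_index(src_ip: str, dst_ip: str, links: int) -> int:
    """XOR the low-order bits of two IPv4 addresses to pick a link number."""
    if links not in (2, 4, 8):
        raise ValueError("this sketch handles only 2-, 4-, and 8-link bundles")
    bits = links.bit_length() - 1          # 2 -> 1 bit, 4 -> 2 bits, 8 -> 3 bits
    mask = (1 << bits) - 1
    src = int(ipaddress.IPv4Address(src_ip))
    dst = int(ipaddress.IPv4Address(dst_ip))
    return (src ^ dst) & mask


for links in (2, 4, 8):
    print(links, link_index("192.168.1.1", "172.31.67.46", links))
```

For the two addresses in the example, the function selects Link 1, Link 3, and Link 7 for the two-, four-, and eight-link bundles.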

A conversation between two devices always is sent through the same EtherChannel link because the two endpoint addresses stay the same. However, when a device talks to several other devices, chances are that the destination addresses are distributed equally with 0s and 1s in the last bit (even and odd address values). This causes the frames to be distributed across the EtherChannel links.

Note that the load distribution is still proportional to the volume of traffic passing between pairs of hosts or link indexes. For example, suppose that there are two pairs of hosts talking across a two-link channel, and each pair of addresses results in a unique link index. Frames from one pair of hosts always travel over one link in the channel, while frames from the other pair travel over the other link. The links both are being used as a result of the hash algorithm, so the load is being distributed across every link in the channel.

However, if one pair of hosts has a much greater volume of traffic than the other pair, one link in the channel will be used much more than the other. This still can create a load imbalance. To remedy this condition, you should consider other methods of hashing algorithms for the channel. For example, a method that uses the source and destination addresses along with UDP or TCP port numbers can distribute traffic much differently. Then, packets are placed on links within the bundle based on the applications used within conversations between two hosts.
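To see why adding port numbers to the hash can help, consider the following Python sketch. It totals the bytes each link would carry for a handful of made-up flows between one pair of hosts. The flow data, the function names, and the way the port numbers are folded into the XOR are all assumptions made for illustration; real hardware may combine the fields differently.

```python
import ipaddress


def low_bits(value: int, links: int) -> int:
    """Keep only the low-order bits needed to index a 2-, 4-, or 8-link bundle."""
    return value & (links - 1)


def by_addresses(flow, links):
    """Hash on source XOR destination IP address only."""
    src = int(ipaddress.IPv4Address(flow["src"]))
    dst = int(ipaddress.IPv4Address(flow["dst"]))
    return low_bits(src ^ dst, links)


def by_addresses_and_ports(flow, links):
    """Assumption for illustration: fold the port numbers into the same XOR."""
    return by_addresses(flow, links) ^ low_bits(flow["sport"] ^ flow["dport"], links)


def link_load(flows, links, hash_fn):
    """Total the bytes that each link would carry under a given hash."""
    load = {link: 0 for link in range(links)}
    for flow in flows:
        load[hash_fn(flow, links)] += flow["bytes"]
    return load


# Four conversations between the same two hosts (hypothetical values).
flows = [
    {"src": "10.1.1.10", "dst": "10.1.2.20", "sport": 40000, "dport": 80, "bytes": 500},
    {"src": "10.1.1.10", "dst": "10.1.2.20", "sport": 40001, "dport": 80, "bytes": 300},
    {"src": "10.1.1.10", "dst": "10.1.2.20", "sport": 40002, "dport": 8080, "bytes": 200},
    {"src": "10.1.1.10", "dst": "10.1.2.20", "sport": 40003, "dport": 80, "bytes": 100},
]

print(link_load(flows, 4, by_addresses))
print(link_load(flows, 4, by_addresses_and_ports))
```

With the address-only hash, every flow lands on the same link because the two endpoint addresses never change. Once the port numbers take part in the hash, the same traffic is spread over all four links, although the per-link volume still depends on how much each conversation sends.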

Configuring EtherChannel Load Balancing

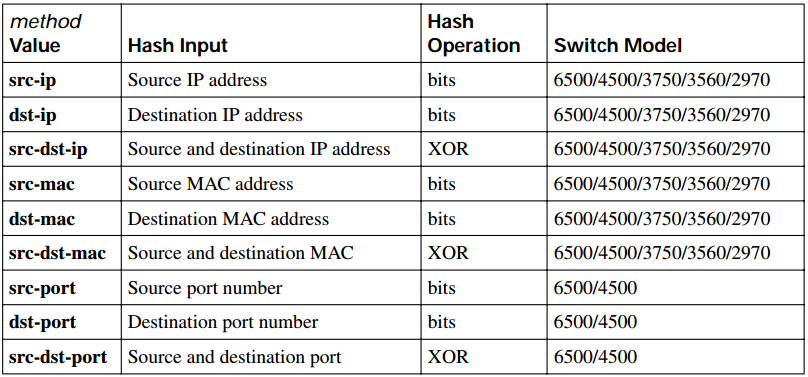

The hashing operation can be performed on either MAC or IP addresses and can be based solely on source or destination addresses, or both. Use the following command to configure frame distribution for all EtherChannel switch links:

Switch(config)# port-channel load-balance method

Notice that the load-balancing method is set with a global configuration command. You must set the method globally for the switch, not on a per-port basis. Table 8-3 lists the possible values for the method variable, along with the hashing operation and some example supporting switch models.

Table 8-3 Types of EtherChannel Load-Balancing Methods

The default configuration is to use source XOR destination IP addresses, or the src-dst-ip method. The default for the Catalyst 2970 and 3560 is src-mac for Layer 2 switching. If Layer 3 switching is used on the EtherChannel, the src-dst-ip method always will be used, even though it is not configurable. Normally, the default action should result in a statistical distribution of frames; however, you should determine whether the EtherChannel is imbalanced according to the traffic patterns present. For example, if a single server is receiving most of the traffic on an EtherChannel, the server’s address (the destination IP address) always will remain constant in the many conversations.

This can cause one link to be overused if the destination IP address is used as a component of a load-balancing method. In the case of a four-link EtherChannel, perhaps two of the four links are overused. Configuring the use of MAC addresses, or only the source IP addresses, might cause the distribution to be more balanced across all the bundled links.

TIP To verify how effectively a configured load-balancing method is performing, you can use the show etherchannel port-channel command. Each link in the channel is displayed, along with a hex “Load” value. Although this information is not intuitive, you can use the hex values to get an idea of each link’s traffic loads relative to the others.

In some applications, EtherChannel traffic might consist of protocols other than IP. For example, IPX or SNA frames might be switched along with IP. Non-IP protocols need to be distributed according to MAC addresses because IP addresses are not applicable. Here, the switch should be configured to use MAC addresses instead of the IP default.

TIP A special case results when a router is connected to an EtherChannel. Recall that a router always uses its burned-in MAC address in Ethernet frames, even though it is forwarding packets to and from many different IP addresses. In other words, many end stations send frames to their local router address with the router’s MAC address as the destination. This means that the destination MAC address is the same for all frames destined through the router.

Usually, this will not present a problem because the source MAC addresses are all different. When two routers are forwarding frames to each other, however, both source and destination MAC addresses remain constant, and only one link of the EtherChannel is used. If the MAC addresses remain constant, choose IP addresses instead. Beyond that, if most of the traffic is between the same two IP addresses, as in the case of two servers talking, choose TCP or UDP port numbers to disperse the frames across different links.

You should choose the load-balancing method that provides the greatest distribution or variety when the channel links are indexed. Also consider the type of addressing that is being used on the network. If most of the traffic is IP, it might make sense to load-balance according to IP addresses or TCP/UDP port numbers. But if IP load balancing is being used, what happens to non-IP frames? If a frame can’t meet the load-balancing criteria, the switch automatically falls back to the “next lowest” method. With Ethernet, MAC addresses always must be present, so the switch distributes those frames according to their MAC addresses.
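The fallback idea can be pictured with a small Python sketch. The hash_inputs function, the dictionary-based frame representation, and the exact fallback order are assumptions made for this illustration; only the general behavior (non-IP frames end up hashed on MAC addresses) comes from the text.

```python
def hash_inputs(frame: dict, method: str):
    """Pick the fields to hash, falling back to MAC addresses for non-IP frames."""
    # Assumed fallback order: ports, then IP addresses, then MAC addresses.
    fallback_order = {
        "src-dst-port": ["src-dst-port", "src-dst-ip", "src-dst-mac"],
        "src-dst-ip": ["src-dst-ip", "src-dst-mac"],
        "src-dst-mac": ["src-dst-mac"],
    }
    required = {
        "src-dst-port": ("src_port", "dst_port"),
        "src-dst-ip": ("src_ip", "dst_ip"),
        "src-dst-mac": ("src_mac", "dst_mac"),
    }
    for candidate in fallback_order[method]:
        fields = required[candidate]
        if all(field in frame for field in fields):
            return candidate, tuple(frame[field] for field in fields)
    raise ValueError("an Ethernet frame always carries MAC addresses")


# A non-IP frame has no IP addresses, so the MAC addresses are used instead.
ipx_frame = {"src_mac": "0000.0c11.1111", "dst_mac": "0000.0c22.2222"}
print(hash_inputs(ipx_frame, "src-dst-ip"))
```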

A switch also provides some inherent protection against bridging loops with EtherChannels. When ports are bundled into an EtherChannel, no inbound (received) broadcasts and multicasts are sent back out over any of the remaining ports in the channel. Outbound broadcast and multicast frames are load-balanced like any other: The broadcast or multicast address becomes part of the hashing calculation to choose an outbound channel link.

EtherChannel Negotiation Protocols

EtherChannels can be negotiated between two switches to provide some dynamic link configuration. Two protocols are available to negotiate bundled links in Catalyst switches. The Port Aggregation Protocol (PAgP) is a Cisco-proprietary solution, and the Link Aggregation Control Protocol (LACP) is standards based.

Port Aggregation Protocol

To provide automatic EtherChannel configuration and negotiation between switches, Cisco developed the Port Aggregation Protocol (PAgP). PAgP packets are exchanged between switches over EtherChannel-capable ports. Neighbors are identified and port group capabilities are learned and compared with local switch capabilities. Ports that have the same neighbor device ID and port group capability are bundled together as a bidirectional, point-to-point EtherChannel link.

PAgP forms an EtherChannel only on ports that are configured for either identical static VLANs or trunking. PAgP also dynamically modifies parameters of the EtherChannel if one of the bundled ports is modified. For example, if the VLAN, speed, or duplex mode of a port in an established bundle is changed, PAgP changes that parameter for all ports in the bundle.

PAgP can be configured in active mode (desirable), in which a switch actively asks a far-end switch to negotiate an EtherChannel, or in passive mode (auto, the default), in which a switch negotiates an EtherChannel only if the far end initiates it.

Link Aggregation Control Protocol

LACP is a standards-based alternative to PAgP, defined in IEEE 802.3ad (also known as IEEE 802.3 Clause 43, “Link Aggregation”). LACP packets are exchanged between switches over EtherChannel-capable ports. As with PAgP, neighbors are identified and port group capabilities are learned and compared with local switch capabilities. However, LACP also assigns roles to the EtherChannel’s endpoints.

The switch with the lowest system priority (a 2-byte priority value followed by a 6-byte switch MAC address) is allowed to make decisions about what ports actively are participating in the EtherChannel at a given time.

Ports are selected and become active according to their port priority value (a 2-byte priority followed by a 2-byte port number), where a low value indicates a higher priority. A set of up to 16 potential links can be defined for each EtherChannel. Through LACP, a switch selects up to eight of these having the lowest port priorities as active EtherChannel links at any given time. The other links are placed in a standby state and will be enabled in the EtherChannel if one of the active links goes down.
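The two selection rules can be sketched in Python as follows. The dictionaries, MAC addresses, and function names are hypothetical; the sketch only orders values the way the text describes (lowest system priority and MAC address wins, then lowest port priority and port number), and is not a model of the full LACP state machine.

```python
def decision_maker(switches):
    """Return the switch with the lowest (system priority, MAC address) pair."""
    def system_id(switch):
        mac_value = int(switch["mac"].replace(".", ""), 16)
        return (switch["priority"], mac_value)
    return min(switches, key=system_id)


def select_active(ports, max_active=8):
    """Split candidate ports into active and standby by (priority, number)."""
    ranked = sorted(ports, key=lambda port: (port["priority"], port["number"]))
    return ranked[:max_active], ranked[max_active:]


# Two switches with the default system priority: the lower MAC address wins.
switches = [
    {"name": "SwitchA", "priority": 32768, "mac": "00d0.5849.4100"},
    {"name": "SwitchB", "priority": 32768, "mac": "00d0.5849.3f00"},
]
print(decision_maker(switches)["name"])

# Ten candidate links; port 10 is given a lower (better) port priority.
ports = [{"number": n, "priority": 32768} for n in range(1, 11)]
ports[9]["priority"] = 100
active, standby = select_active(ports)
print([port["number"] for port in active])
print([port["number"] for port in standby])
```

In this example, port 10 becomes active ahead of the others because of its lower priority value, ports 1 through 7 fill the remaining active slots in port-number order, and ports 8 and 9 wait in standby.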

Like PAgP, LACP can be configured in active mode (active), in which a switch actively asks a far-end switch to negotiate an EtherChannel, or in passive mode (passive), in which a switch negotiates an EtherChannel only if the far end initiates it.
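The mode combinations for both protocols can be summarized with a short Python sketch. The channel_forms function and its lookup tables are hypothetical names for this illustration, and the PAgP silent submode is deliberately left out.

```python
# Which negotiation protocol each mode keyword belongs to (None = no protocol).
PROTOCOL_OF = {
    "on": None,
    "desirable": "pagp",
    "auto": "pagp",
    "active": "lacp",
    "passive": "lacp",
}

# Modes that actively ask the far end to form a channel.
INITIATORS = {"desirable", "active"}


def channel_forms(local: str, remote: str) -> bool:
    """Return True if two ends configured with these modes can form a channel."""
    if local == "on" or remote == "on":
        # The on mode sends no PAgP or LACP packets, so both ends must use it.
        return local == remote
    if PROTOCOL_OF[local] != PROTOCOL_OF[remote]:
        return False
    # At least one end has to initiate the negotiation.
    return local in INITIATORS or remote in INITIATORS


for pair in [("desirable", "auto"), ("auto", "auto"),
             ("active", "passive"), ("passive", "passive")]:
    print(pair, channel_forms(*pair))
```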

EtherChannel Configuration

For each EtherChannel on a switch, you must choose the EtherChannel negotiation protocol and assign individual switch ports to the EtherChannel. Both PAgP- and LACP-negotiated EtherChannels are described in the following sections. You also can configure an EtherChannel to use the on mode, which unconditionally bundles the links. In this case, neither PAgP nor LACP packets are sent or received.

As ports are configured to be members of an EtherChannel, the switch automatically creates a logical port-channel interface. This interface represents the channel as a whole.

Configuring a PAgP EtherChannel

To configure switch ports for PAgP negotiation (the default), use the following commands:

Switch(config)# interface type mod/num

Switch(config-if)# channel-protocol pagp

Switch(config-if)# channel-group number mode {on | {auto | desirable} [non-silent]}

On all Cisco IOS–based Catalyst models (2970, 3560, 4500, and 6500), you can select between PAgP and LACP as a channel-negotiation protocol. Older models such as the Catalyst 2950, however, offer only PAgP, so the channel-protocol command is not available. Each interface that will be included in a single EtherChannel bundle must be configured and assigned to the same unique channel group number (1 to 64). Channel negotiation must be set to on (unconditionally channel, no PAgP negotiation), auto (passively listen and wait to be asked), or desirable (actively ask).

TIP IOS-based Catalyst switches do not assign interfaces to predetermined channel groups by default. In fact, the interfaces are not assigned to channel groups until you configure them manually.

This is different from Catalyst OS (CatOS) switches, such as the Catalyst 4000 (Supervisors I and II), 5000, and 6500 (hybrid mode). On those platforms, Ethernet line cards are broken up into default channel groups.

By default, PAgP operates in silent submode with the desirable and auto modes, and allows ports to be added to an EtherChannel even if the other end of the link is silent and never transmits PAgP packets. This might seem to go against the idea of PAgP, in which two endpoints are supposed to negotiate a channel. After all, how can two switches negotiate anything if no PAgP packets are received?

The key is in the phrase “if the other end is silent.” The silent submode listens for any PAgP packets from the far end, looking to negotiate a channel. If none is received, silent submode assumes that a channel should be built anyway, so no more PAgP packets are expected from the far end.

This allows a switch to form an EtherChannel with a device such as a file server or a network analyzer that doesn’t participate in PAgP. In the case of a network analyzer connected to the far end, you also might want to see the PAgP packets generated by the switch, as if you were using a normal PAgP EtherChannel.

If you expect a PAgP-capable switch to be on the far end, you should add the non-silent keyword to the desirable or auto mode. This requires each port to receive PAgP packets before it is added to a channel. If PAgP isn’t heard on an active port, the port remains in the up state, but PAgP reports to the Spanning Tree Protocol (STP) that the port is down.

TIP In practice, you might notice a delay from the time the links in a channel group are connected until the time the channel is formed and data can pass over it. You will encounter this if both switches are using the default PAgP auto mode and silent submode. Each interface waits to be asked to form a channel, and each interface waits and listens before accepting silent channel partners. The silent submode amounts to approximately a 15-second delay.

Even if the two interfaces are using PAgP auto mode, the link will still eventually come up, although not as a channel. You might notice that the total delay before data can pass over the link is actually approximately 45 or 50 seconds. The first 15 seconds are the result of PAgP silent mode waiting to hear inbound PAgP messages, and the final 30 seconds are the result of the STP moving through the listening and learning stages.

As an example of PAgP configuration, suppose that you want a switch to use an EtherChannel load-balancing hash of both source and destination port numbers. A Gigabit EtherChannel (GEC) will be built from interfaces GigabitEthernet 3/1 through 3/4, with the switch actively negotiating a channel. The switch should not wait to listen for silent partners. You can use the following configuration commands to accomplish this:

Switch(config)# port-channel load-balance src-dst-port

Switch(config)# interface range gig 3/1 - 4

Switch(config-if)# channel-protocol pagp

Switch(config-if)# channel-group 1 mode desirable non-silent

Configuring a LACP EtherChannel

To configure switch ports for LACP negotiation, use the following commands:

Switch(config)# lacp system-priority priority

Switch(config)# interface type mod/num

Switch(config-if)# channel-protocol lacp

Switch(config-if)# channel-group number mode {on | passive | active}

Switch(config-if)# lacp port-priority priority

First, the switch should have its LACP system priority defined (1 to 65,535, default 32,768). If desired, one switch should be assigned a lower system priority than the other so that it can make decisions about the EtherChannel’s makeup. Otherwise, both switches will have the same system priority (32,768), and the one with the lower MAC address will become the decision maker.

Each interface included in a single EtherChannel bundle must be assigned to the same unique channel group number (1 to 64). Channel negotiation must be set to on (unconditionally channel, no LACP negotiation), passive (passively listen and wait to be asked), or active (actively ask).

You can configure more interfaces in the channel group number than are allowed to be active in the channel. This prepares extra standby interfaces to replace failed active ones. Use the lacp port-priority command to configure a lower port priority (1 to 65,535, default 32,768) for any interfaces that must be active, and a higher priority for interfaces that might be held in the standby state.

Otherwise, just use the default scenario, in which all ports default to 32,768 and the lower port numbers (in interface number order) are used to select the active ports.

As an example of LACP configuration, suppose that you want to configure a switch to negotiate a Gigabit EtherChannel (GEC) using interfaces GigabitEthernet 2/1 through 2/4 and 3/1 through 3/4. Interfaces GigabitEthernet 2/5 through 2/8 and 3/5 through 3/8 are also available, so these can be used as standby links to replace failed links in the channel. This switch actively should negotiate the channel and should be the decision maker about the channel operation.

You can use the following configuration commands to accomplish this:

Switch(config)# lacp system-priority 100

Switch(config)# interface range gig 2/1 - 4 , gig 3/1 - 4

Switch(config-if)# channel-protocol lacp

Switch(config-if)# channel-group 1 mode active

Switch(config-if)# lacp port-priority 100

Switch(config-if)# exit

Switch(config)# interface range gig 2/5 - 8 , gig 3/5 - 8

Switch(config-if)# channel-protocol lacp

Switch(config-if)# channel-group 1 mode active

Notice that interfaces GigabitEthernet 2/5-8 and 3/5-8 have been left to their default port priorities of 32768. This is higher than the others, which were configured for 100, so they will be held as standby interfaces.

Troubleshooting an EtherChannel

If you find that an EtherChannel is having problems, remember that the whole concept is based on consistent configurations on both ends of the channel. Here are some reminders about EtherChannel operation and interaction:

- EtherChannel on mode does not send or receive PAgP or LACP packets. Therefore, both ends should be set to on mode before the channel can form.

- EtherChannel desirable (PAgP) or active (LACP) mode attempts to ask the far end to bring up a channel. Therefore, the other end must be set to either desirable or auto mode (PAgP) or to either active or passive mode (LACP).

- EtherChannel auto (PAgP) or passive (LACP) mode participates in the channel protocol, but only if the far end asks for participation. Therefore, two switches in the auto or passive mode will not form an EtherChannel.

- PAgP desirable and auto modes default to the silent submode, in which no PAgP packets are expected from the far end. If ports are set to nonsilent submode, PAgP packets must be received before a channel will form.

First, verify the EtherChannel state with the show etherchannel summary command. Each port in the channel is shown, along with flags indicating the port’s state, as shown in Example 8-1.

Example 8-1 show etherchannel summary Command Output

Switch# show etherchannel summary
Flags:  D - down        P - in port-channel
        I - stand-alone s - suspended
        H - Hot-standby (LACP only)
        R - Layer3      S - Layer2
        u - unsuitable for bundling
        U - in use      f - failed to allocate aggregator
        d - default port
Number of channel-groups in use: 1
Number of aggregators:           1
Group  Port-channel  Protocol    Ports
------+-------------+-----------+-----------------------------------------------
1      Po1(SU)       PAgP        Fa0/41(P)   Fa0/42(P)   Fa0/43(D)   Fa0/44(P)
                                 Fa0/45(P)   Fa0/46(P)   Fa0/47(P)   Fa0/48(P)

The status of the port-channel shows the EtherChannel logical interface as a whole. This should show SU (Layer 2 channel, in use) if the channel is operational. You also can examine the status of each port within the channel. Notice that most of the channel ports have flags (P), indicating that they are active in the port-channel. One port, Fa0/43, shows (D) because it is physically not connected or down. If a port is connected but not bundled in the channel, it will have an independent, or (I), flag.
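When many channels must be checked, the per-port flags in the Ports column of the show etherchannel summary output can be pulled apart with a short script. The following Python sketch is one possible approach; the port_flags function, the regular expression, and the sample string are assumptions modeled on the flag notation described here, not a tested parser for every IOS release.

```python
import re

# A port token looks like "Fa0/41(P)": an interface name plus an optional flag.
PORT_TOKEN = re.compile(r"([A-Za-z]+\d+(?:/\d+)*)(?:\((\w)\))?")


def port_flags(ports_field: str) -> dict:
    """Map each port name to its flag letter ('' when no flag is shown)."""
    return {name: flag for name, flag in PORT_TOKEN.findall(ports_field)}


# Hypothetical contents of the Ports column for one channel group.
sample = "Fa0/41(P) Fa0/42(P) Fa0/43(D) Fa0/44(P)"
flags = port_flags(sample)
not_bundled = [name for name, flag in flags.items() if flag != "P"]
print(flags)
print(not_bundled)
```

Any port that appears in the second list deserves a closer look with the commands described in the rest of this section.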

You can verify the channel negotiation mode with the show etherchannel port command, as shown in Example 8-2. The local switch is shown using desirable mode with PAgP (Desirable-Sl is desirable silent mode). Notice that you also can see the far end’s negotiation mode under the Partner Flags heading, as A, or auto mode.

Example 8-2 show etherchannel port Command Output

Switch# show etherchannel port
                Channel-group listing:
                -----------------------
Group: 1
----------
                Ports in the group:
                -------------------
Port: Fa0/41
------------
Port state    = Up Mstr In-Bndl
Channel group = 1           Mode = Desirable-Sl     Gcchange = 0
Port-channel  = Po1         GC   = 0x00010001       Pseudo port-channel = Po1
Port index    = 0           Load = 0x00             Protocol = PAgP
Flags:  S - Device is sending Slow hello.  C - Device is in Consistent state.
        A - Device is in Auto mode.        P - Device learns on physical port.
        d - PAgP is down.
Timers: H - Hello timer is running.        Q - Quit timer is running.
        S - Switching timer is running.    I - Interface timer is running.
Local information:
                                Hello    Partner  PAgP      Learning  Group
Port      Flags State   Timers  Interval Count    Priority  Method    Ifindex
Fa0/41    SC    U6/S7   H       30s      1        128       Any       55
Partner’s information:
          Partner              Partner          Partner         Partner Group
Port      Name                 Device ID        Port       Age  Flags   Cap.
Fa0/41    FarEnd               00d0.5849.4100   3/1        19s  SAC     11
Age of the port in the current state: 00d:08h:05m:28s

Within a switch, an EtherChannel cannot form unless each of the component or member ports is configured consistently. Each must have the same switch mode (access or trunk), native VLAN, trunked VLANs, port speed, port duplex mode, and so on.

You can display a port’s configuration by looking at the show running-config interface type mod/num output. Also, the show interface type mod/num etherchannel command shows all active EtherChannel parameters for a single port. If you configure a port inconsistently with others for an EtherChannel, you see error messages from the switch.

Some messages from the switch might look like errors but are part of the normal EtherChannel process. For example, as a new port is configured as a member of an existing EtherChannel, you might see this message:

4d00h: %EC-5-L3DONTBNDL2: FastEthernet0/2 suspended: incompatible partner port with FastEthernet0/1

When the port first is added to the EtherChannel, it is incompatible because the STP runs on the channel and the new port. After STP takes the new port through its progression of states, the port automatically is added into the EtherChannel.

Other messages do indicate a port-compatibility error. In these cases, the cause of the error is shown. For example, the following message indicates that FastEthernet0/3 has a different duplex mode than the other ports in the EtherChannel:

4d00h: %EC-5-CANNOT_BUNDLE2: FastEthernet0/3 is not compatible with FastEthernet0/1 and will be suspended (duplex of Fa0/3 is full, Fa0/1 is half)

Finally, you can verify the EtherChannel load-balancing or hashing algorithm with the show etherchannel load-balance command. Remember that the switches on either end of an EtherChannel can have different load-balancing methods. The only drawback to this is that the load balancing will be asymmetric in the two directions across the channel.

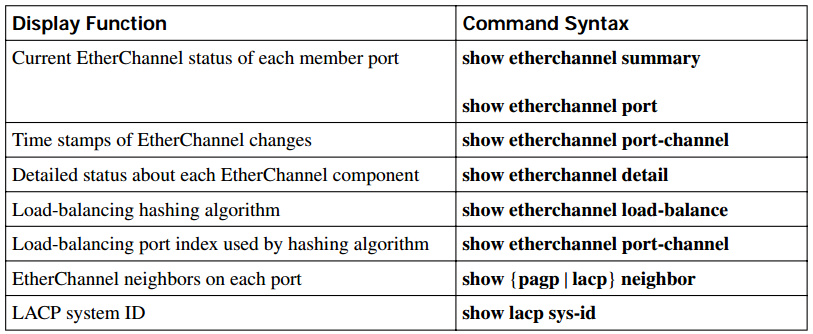

Table 8-4 lists the commands useful for verifying or troubleshooting EtherChannel operation.

Table 8-4 EtherChannel Troubleshooting Commands